Production postmortem: The random high CPU

A customer complained that every now and then RavenDB would hit 100% CPU and stay there. They were kind enough to provide a minidump, and I started the investigation.

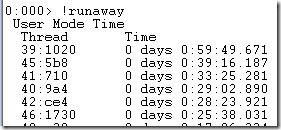

I loaded the minidump into WinDbg and started debugging. The first thing you do with high CPU is run the “!runaway” command, which sorts the threads by how much CPU time they have consumed:

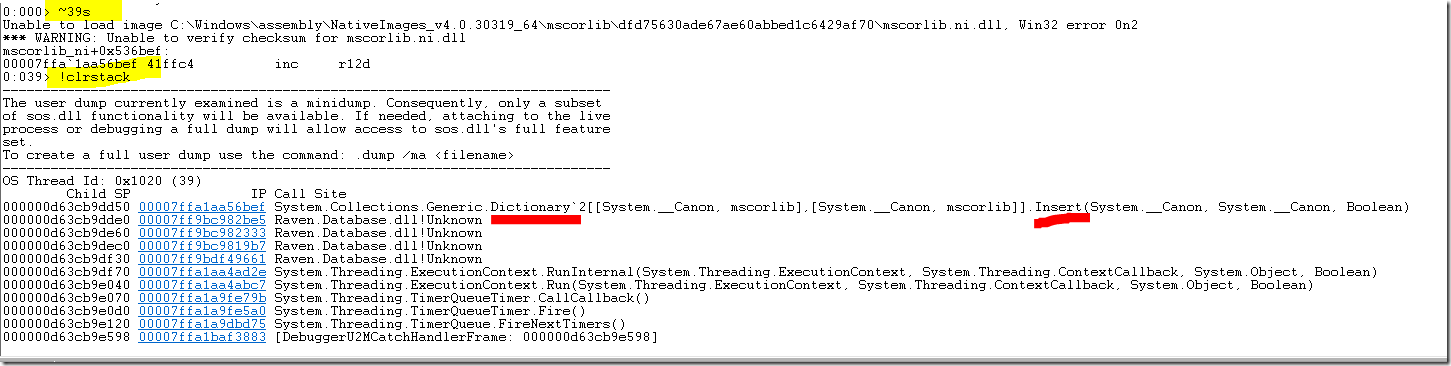

I switched to the first thread (39) and asked for its stack. I highlighted the interesting parts:

This is enough to form a strong suspicion about what is going on. I checked some of the other high CPU threads and they confirmed it, but this single stack trace is enough on its own.

Pretty much whenever you see a thread burning CPU inside the Dictionary class, it means that you are accessing the dictionary from multiple threads at the same time. This is unsafe and may lead to strange effects, one of them being an infinite loop.
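If a map really does have to be shared between threads, one common option is `ConcurrentDictionary` (another is guarding every access to a plain `Dictionary` with a lock). The sketch below is only an illustration of that idea; the `SharedCounters` class and its key names are hypothetical and are not taken from RavenDB.

```csharp
using System;
using System.Collections.Concurrent;
using System.Threading.Tasks;

// Hypothetical example type, not part of RavenDB.
public static class SharedCounters
{
    // ConcurrentDictionary supports concurrent readers and writers,
    // unlike Dictionary<TKey, TValue>.
    private static readonly ConcurrentDictionary<string, int> Counts =
        new ConcurrentDictionary<string, int>();

    public static void Record(string key)
    {
        // The stored value is updated atomically; the update delegate
        // may be invoked more than once under contention.
        Counts.AddOrUpdate(key, 1, (_, current) => current + 1);
    }

    public static int Get(string key)
    {
        int value;
        return Counts.TryGetValue(key, out value) ? value : 0;
    }

    public static void Main()
    {
        // Many threads hammer the same small set of keys at once.
        Parallel.For(0, 100000, i => Record("key" + (i % 16)));
        Console.WriteLine("key0 = " + Get("key0"));
    }
}
```

With a plain `Dictionary` in place of `ConcurrentDictionary`, the same loop could corrupt the internal buckets, which is the kind of state that can leave a lookup spinning forever.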

In this case, several threads were caught in this infinite loop. The stack trace also told us where in RavenDB this was happening. From there we could confirm that there is indeed a rare set of circumstances in which a timer fires again before the previous callback has had a chance to complete. Both callbacks then modify the same dictionary, causing the issue.
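To see how two timer callbacks can end up running at once, here is a small sketch using `System.Threading.Timer`, whose callbacks run on thread pool threads and are not serialized. The 50 ms period and 300 ms sleep are made-up numbers chosen so the overlap shows up quickly; `OverlappingTimerDemo` is a hypothetical name and this is not the RavenDB code.

```csharp
using System;
using System.Threading;

// Hypothetical demo type, not part of RavenDB.
public static class OverlappingTimerDemo
{
    private static int _running;      // callbacks currently executing
    private static int _maxObserved;  // highest number seen running at once

    private static void Callback(object state)
    {
        int now = Interlocked.Increment(ref _running);

        // Record the highest number of simultaneous callbacks seen so far.
        int seen;
        while (now > (seen = Volatile.Read(ref _maxObserved)) &&
               Interlocked.CompareExchange(ref _maxObserved, now, seen) != seen)
        {
        }

        Thread.Sleep(300); // simulate a run that takes longer than the period
        Interlocked.Decrement(ref _running);
    }

    public static int Run()
    {
        // Periodic timer: fires every 50 ms whether or not the last run finished.
        using (var timer = new Timer(Callback, null, 0, 50))
        {
            Thread.Sleep(1000);
        }
        return Volatile.Read(ref _maxObserved);
    }

    public static void Main()
    {
        Console.WriteLine("Max overlapping callbacks: " + Run());
    }
}
```

Any value above 1 means two callbacks were inside the method at the same time, which is exactly the window in which a shared `Dictionary` can be modified concurrently.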


Comments

In my opinion, concurrency-related bugs that happen rarely ('sometimes') are the hardest to deal with.

Congratulations on finding the cause so effectively, it's really amazing.

Usually a little Googling with the method in question together with high CPU will lead you to an article: https://www.codeproject.com/Tips/1130593/Troubleshooting-High-CPU-Usage-of-a-NET-Web-Applic. It was a pretty common issue to see with people dealing with multithreading while forgetting to use locks. The initial Dictionary (.NET 2.0) implementation did cause an exception. But around .NET 4.0 they switched the hash lookup into a way which was susceptible to deadlocking instead.

If you look carefully you will see that only NGenned stack frames are visible in the minidump. The JITed code parts will always be missing.

Curious what pattern you had that allowed potential concurrent access to the dictionary. I'm spoilt in my app that I've managed to avoid having dictionaries that could even potentially be shared by multiple threads. Part of that is design / planning, and part is good fortune.

Is this something you're planning to audit somehow?

Ian,

That piece of code is meant to run in a single threaded fashion.

It is a timer called every 30 minutes or so.

Somehow, it stalled for that long and two concurrent runs happened, which resulted in the race condition.

To prevent this from happening, in our code such timers get an infinite period (i.e. fire once), and in the callback the timer then gets updated to fire again. In code:

This produces a timer which fires after 30 minutes, then again 30 minutes after the callback has completed, and so on.
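A minimal sketch of the pattern described above, assuming `System.Threading.Timer`. The `NonOverlappingTimer` wrapper, the `Program` class and the short demo interval are hypothetical and stand in for the real 30 minute timer; this is an illustration, not the commenter's or RavenDB's actual code.

```csharp
using System;
using System.Threading;

// Hypothetical wrapper illustrating the "fire once, then re-arm" pattern.
public sealed class NonOverlappingTimer : IDisposable
{
    private readonly Timer _timer;
    private readonly Action _work;
    private readonly TimeSpan _interval;

    public NonOverlappingTimer(Action work, TimeSpan interval)
    {
        _work = work;
        _interval = interval;
        // Create the timer disarmed so the callback cannot run before
        // the _timer field has been assigned.
        _timer = new Timer(OnTick, null, Timeout.InfiniteTimeSpan, Timeout.InfiniteTimeSpan);
        // Infinite period: fire once after the interval and never repeat on its own.
        _timer.Change(interval, Timeout.InfiniteTimeSpan);
    }

    private void OnTick(object state)
    {
        try
        {
            _work();
        }
        finally
        {
            try
            {
                // Re-arm only after the work has finished, so runs cannot overlap.
                _timer.Change(_interval, Timeout.InfiniteTimeSpan);
            }
            catch (ObjectDisposedException)
            {
                // The timer was disposed while the callback was running.
            }
        }
    }

    public void Dispose()
    {
        _timer.Dispose();
    }
}

public static class Program
{
    public static void Main()
    {
        // Short interval so the demo finishes quickly.
        using (new NonOverlappingTimer(
            () => { Console.WriteLine("tick"); Thread.Sleep(200); },
            TimeSpan.FromMilliseconds(100)))
        {
            Thread.Sleep(1000);
        }
    }
}
```

The trade-off is that the interval is measured from the end of one run to the start of the next, so the schedule drifts by however long each run takes.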

Daniel,

Yes, we started to use something very similar.
